This step-by-step guide explains what KVM is, how to install and configure KVM on an Ubuntu 20.04 server, and how to create and manage KVM guest machines with the virsh program.


What is KVM?

KVM, short for Kernel-based Virtual Machine, is a virtualization module in the Linux kernel that allows the kernel to act as a hypervisor (it has also been ported to FreeBSD). KVM was merged into the mainline Linux kernel in version 2.6.20.

Using KVM, you can easily set up a virtualization environment on a Linux machine and host a wide range of guest operating systems, including Linux, Windows, BSD, macOS and many more.

In this guide, we will look at how to install and configure KVM on a headless Ubuntu 20.04 server. We will also see how to create and manage KVM guest machines using the virsh command-line utility.

Prerequisites

Before installing KVM, first make sure your system's processor supports hardware virtualization. We have documented a few different ways to identify whether a Linux system supports virtualization in the following guide.

If your system supports hardware virtualization, continue with the following steps.
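For a quick check, here is a minimal sketch, assuming an x86 host: Intel VT-x shows up as the `vmx` CPU flag and AMD-V as `svm` in /proc/cpuinfo. The function name `check_virt_support` is hypothetical. On Ubuntu, the `kvm-ok` tool from the cpu-checker package performs a similar check.

```shell
#!/usr/bin/env bash
# Minimal sketch (assumes an x86 host): report whether the CPU advertises
# hardware virtualization. Intel VT-x is the "vmx" flag, AMD-V is "svm".
# The cpuinfo path is a parameter so the function can be tried on a copy.
check_virt_support() {
  local cpuinfo=${1:-/proc/cpuinfo}
  # -w matches whole words only, so e.g. "vme" is not mistaken for "vmx".
  if grep -qw vmx "$cpuinfo"; then
    echo "Intel VT-x (vmx) supported"
  elif grep -qw svm "$cpuinfo"; then
    echo "AMD-V (svm) supported"
  else
    echo "no hardware virtualization flags found"
    return 1
  fi
}
```

Run `check_virt_support` with no arguments to inspect the live /proc/cpuinfo. Note that the flag can be present while virtualization is still disabled in the BIOS/UEFI settings.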

1. Install And Configure KVM In Ubuntu 20.04 LTS

For the purpose of this guide, I will be using the following systems.

KVM virtualization server:

- OS – Ubuntu 20.04 LTS minimal server (No GUI)

- IP Address : 192.168.225.52/24

Remote Client:

- OS – Ubuntu 20.04 GNOME Desktop

First, let us install KVM in the Ubuntu server.

1.1. Install KVM in Ubuntu 20.04 LTS

Install KVM and all the dependencies required to set up a virtualization environment on your Ubuntu 20.04 LTS server using the command:

$ sudo apt install qemu qemu-kvm libvirt-clients libvirt-daemon-system virtinst bridge-utils

Here,

- qemu - A generic machine emulator and virtualizer,

- qemu-kvm - QEMU metapackage for KVM support (i.e. QEMU Full virtualization on x86 hardware),

- libvirt-clients - programs for the libvirt library,

- libvirt-daemon-system - Libvirt daemon configuration files,

- virtinst - programs to create and clone virtual machines,

- bridge-utils - utilities for configuring the Linux Ethernet bridge.

Once KVM is installed, enable and start the libvirtd service (if it is not started already):

$ sudo systemctl enable libvirtd

$ sudo systemctl start libvirtd

Check the status of the libvirtd service with the command:

$ systemctl status libvirtd

Sample output:

● libvirtd.service - Virtualization daemon
     Loaded: loaded (/lib/systemd/system/libvirtd.service; enabled; vendor preset: enabled)
     Active: active (running) since Sat 2020-07-04 08:13:41 UTC; 7min ago
TriggeredBy: ● libvirtd-ro.socket
             ● libvirtd-admin.socket
             ● libvirtd.socket
       Docs: man:libvirtd(8)
             https://libvirt.org
   Main PID: 4492 (libvirtd)
      Tasks: 19 (limit: 32768)
     Memory: 12.9M
     CGroup: /system.slice/libvirtd.service
             ├─4492 /usr/sbin/libvirtd
             ├─4641 /usr/sbin/dnsmasq --conf-file=/var/lib/libvirt/dnsmasq/default.conf --l>
             └─4642 /usr/sbin/dnsmasq --conf-file=/var/lib/libvirt/dnsmasq/default.conf --l>

Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: compile time options: IPv6 GNU-getopt DBus i18n>
Jul 04 08:13:42 ubuntuserver dnsmasq-dhcp[4641]: DHCP, IP range 192.168.122.2 -- 192.168.12>
Jul 04 08:13:42 ubuntuserver dnsmasq-dhcp[4641]: DHCP, sockets bound exclusively to interfa>
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: reading /etc/resolv.conf
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: using nameserver 127.0.0.53#53
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: read /etc/hosts - 7 addresses
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: read /var/lib/libvirt/dnsmasq/default.addnhosts>
Jul 04 08:13:42 ubuntuserver dnsmasq-dhcp[4641]: read /var/lib/libvirt/dnsmasq/default.host>
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: reading /etc/resolv.conf
Jul 04 08:13:42 ubuntuserver dnsmasq[4641]: using nameserver 127.0.0.53#53

Well, the libvirtd service has been enabled and started! Let us do the rest of the configuration now.

1.2. Set up Bridge networking with KVM in Ubuntu

A bridged network shares the host computer's real network interface with the VMs, so they can connect to the outside network directly. Each VM can then bind to any available IPv4 or IPv6 address, just like a physical computer.

By default, KVM sets up a private virtual bridge so that all VMs can communicate with one another within the host computer. It provides its own subnet and DHCP to configure the guests' networks, and uses NAT to access the host network.

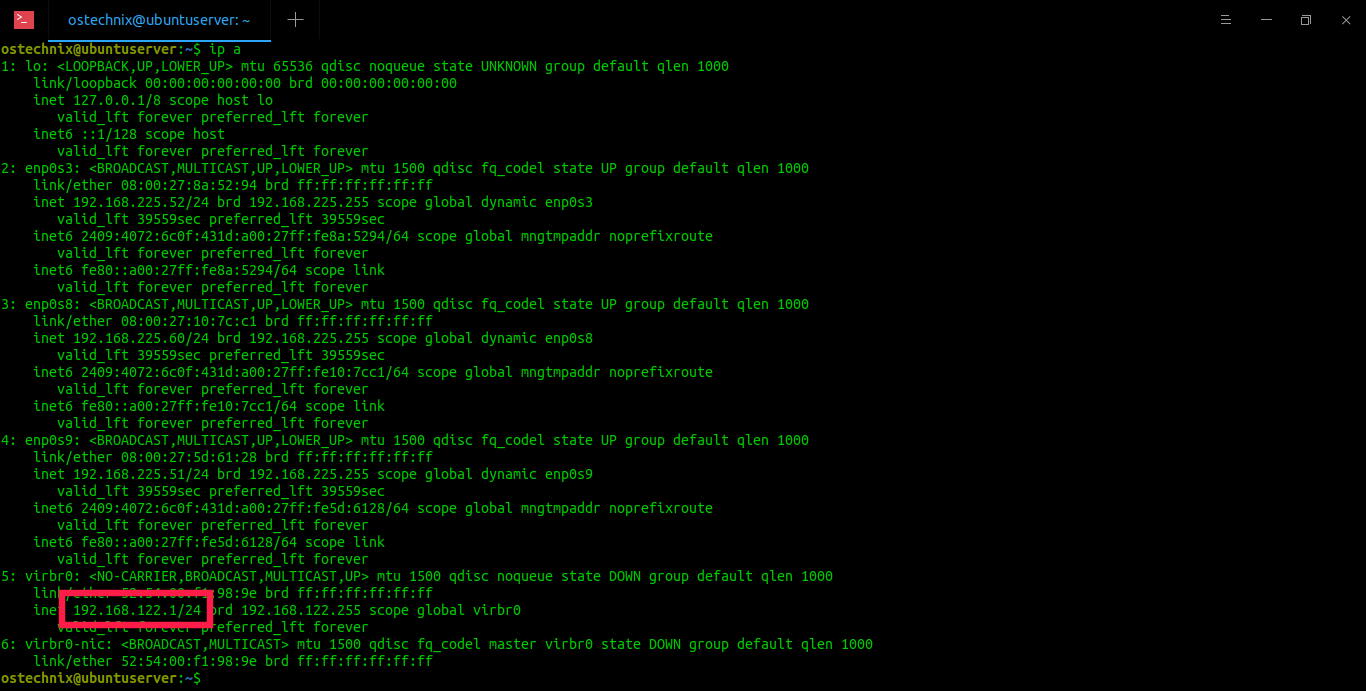

Have a look at the IP address of the KVM default virtual interfaces using the "ip" command:

$ ip a

Sample output:

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
    link/ether 08:00:27:8a:52:94 brd ff:ff:ff:ff:ff:ff
    inet 192.168.225.52/24 brd 192.168.225.255 scope global dynamic enp0s3
       valid_lft 39559sec preferred_lft 39559sec
    inet6 2409:4072:6c0f:431d:a00:27ff:fe8a:5294/64 scope global mngtmpaddr noprefixroute
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe8a:5294/64 scope link
       valid_lft forever preferred_lft forever
3: enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
    link/ether 08:00:27:10:7c:c1 brd ff:ff:ff:ff:ff:ff
    inet 192.168.225.60/24 brd 192.168.225.255 scope global dynamic enp0s8
       valid_lft 39559sec preferred_lft 39559sec
    inet6 2409:4072:6c0f:431d:a00:27ff:fe10:7cc1/64 scope global mngtmpaddr noprefixroute
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe10:7cc1/64 scope link
       valid_lft forever preferred_lft forever
4: enp0s9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
    link/ether 08:00:27:5d:61:28 brd ff:ff:ff:ff:ff:ff
    inet 192.168.225.51/24 brd 192.168.225.255 scope global dynamic enp0s9
       valid_lft 39559sec preferred_lft 39559sec
    inet6 2409:4072:6c0f:431d:a00:27ff:fe5d:6128/64 scope global mngtmpaddr noprefixroute
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe5d:6128/64 scope link
       valid_lft forever preferred_lft forever
5: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000
    link/ether 52:54:00:f1:98:9e brd ff:ff:ff:ff:ff:ff
    inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0
       valid_lft forever preferred_lft forever
6: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000
    link/ether 52:54:00:f1:98:9e brd ff:ff:ff:ff:ff:ff

As you can see, the KVM default network virbr0 uses the 192.168.122.1/24 IP address. All the VMs will use an IP address in the 192.168.122.0/24 range, and the host OS will be reachable at 192.168.122.1. You should be able to SSH into the host OS (at 192.168.122.1) from inside the guest OS and use scp to copy files back and forth.

This is fine if you only access the VMs from the host itself. However, other remote systems on the network can't reach the VMs, because the VMs sit behind NAT on a different IP range from the rest of the network (192.168.225.0/24 in my case). To access the VMs from other remote hosts, we must set up a public bridge that runs on the host network and uses whatever external DHCP server is on the host network.

In layman's terms, we are going to make all VMs use the same IP range as the host system.

Before setting up a public bridged network, we should disable Netfilter on bridges for performance and security reasons. Netfilter is enabled on bridges by default.

To disable netfilter, create a file called /etc/sysctl.d/bridge.conf:

$ sudo vi /etc/sysctl.d/bridge.conf

Add the following lines:

net.bridge.bridge-nf-call-ip6tables=0
net.bridge.bridge-nf-call-iptables=0
net.bridge.bridge-nf-call-arptables=0

Save and close the file.

Then create another file called /etc/udev/rules.d/99-bridge.rules:

$ sudo vi /etc/udev/rules.d/99-bridge.rules

Add the following line:

ACTION=="add", SUBSYSTEM=="module", KERNEL=="br_netfilter", RUN+="/sbin/sysctl -p /etc/sysctl.d/bridge.conf"

This will set the necessary flags to disable netfilter on bridges at the appropriate point during system start-up. Save and close the file. Reboot your system for these changes to take effect.
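The two files above can also be written by a small script. This is a minimal sketch that is not part of the original steps: the function name `write_bridge_netfilter_conf` is hypothetical, and it takes an optional root directory so you can preview the files in a scratch directory before writing them to the real filesystem as root.

```shell
#!/usr/bin/env bash
# Minimal sketch: write /etc/sysctl.d/bridge.conf and
# /etc/udev/rules.d/99-bridge.rules in one go. The optional argument is a
# root directory prefix (empty = the real "/", which requires root).
write_bridge_netfilter_conf() {
  local root=${1:-}
  local sysctl_file="$root/etc/sysctl.d/bridge.conf"
  local rule_file="$root/etc/udev/rules.d/99-bridge.rules"
  mkdir -p "${sysctl_file%/*}" "${rule_file%/*}" || return 1
  # Stop bridged traffic from being passed to ip6tables/iptables/arptables.
  cat > "$sysctl_file" <<'EOF'
net.bridge.bridge-nf-call-ip6tables=0
net.bridge.bridge-nf-call-iptables=0
net.bridge.bridge-nf-call-arptables=0
EOF
  # Re-apply those settings whenever the br_netfilter module is loaded.
  cat > "$rule_file" <<'EOF'
ACTION=="add", SUBSYSTEM=="module", KERNEL=="br_netfilter", RUN+="/sbin/sysctl -p /etc/sysctl.d/bridge.conf"
EOF
}
```

For example, `write_bridge_netfilter_conf /tmp/preview` writes both files under /tmp/preview; calling it with no argument from a root shell writes them to /etc.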

Next, we should disable the default networking that KVM installed for itself.

Find the names of the KVM default network interfaces using the "ip link" command:

$ ip link

Sample output:

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:8a:52:94 brd ff:ff:ff:ff:ff:ff
3: enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:10:7c:c1 brd ff:ff:ff:ff:ff:ff
4: enp0s9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:5d:61:28 brd ff:ff:ff:ff:ff:ff
5: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN mode DEFAULT group default qlen 1000
    link/ether 52:54:00:1f:a2:e7 brd ff:ff:ff:ff:ff:ff
6: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN mode DEFAULT group default qlen 1000
    link/ether 52:54:00:1f:a2:e7 brd ff:ff:ff:ff:ff:ff

As you can see in the output above, the entries "virbr0" and "virbr0-nic" are the KVM networks.

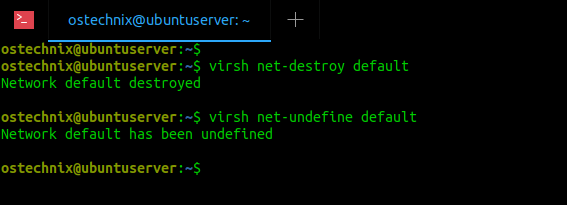

First, stop (destroy) the default KVM network with the command:

$ virsh net-destroy default

Sample output:

Network default destroyed

Then undefine the default network with the command:

$ virsh net-undefine default

Sample output:

Network default has been undefined

If the above commands don't work for any reason, you can delete the KVM default network interfaces directly with these commands:

$ sudo ip link delete virbr0 type bridge

$ sudo ip link delete virbr0-nic
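Because `virsh net-destroy` fails when the network is already inactive, and both commands fail once it has been undefined, a re-runnable version can be handy. This is a minimal sketch with a hypothetical function name; it relies on `virsh net-list --name` (active networks only) and `virsh net-list --all --name` (all defined networks), and should be run as root so that virsh connects to the system instance (qemu:///system).

```shell
#!/usr/bin/env bash
# Minimal sketch: remove a libvirt network only if it exists, so the step
# can be re-run safely. "default" is the network created at install time.
remove_libvirt_network() {
  local net=${1:-default}
  # "--all --name" prints every defined network (active or not), one per line.
  if ! virsh net-list --all --name | grep -qx "$net"; then
    echo "network '$net' is not defined, nothing to do"
    return 0
  fi
  # Only an active network needs to be destroyed (stopped) before undefining.
  if virsh net-list --name | grep -qx "$net"; then
    virsh net-destroy "$net"
  fi
  virsh net-undefine "$net"
}
```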

Now run "ip link" again to verify that the virbr0 and virbr0-nic interfaces have actually been deleted:

$ ip link

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:8a:52:94 brd ff:ff:ff:ff:ff:ff
3: enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:10:7c:c1 brd ff:ff:ff:ff:ff:ff
4: enp0s9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP mode DEFAULT group default qlen 1000
    link/ether 08:00:27:5d:61:28 brd ff:ff:ff:ff:ff:ff

See? The KVM default networks are gone.

Now, let us set up the KVM public bridge to use when creating new VMs.

Note:

Do not use wireless network interface cards for bridges. Most wireless interfaces do not support bridging. Always use wired network interfaces for seamless connectivity!

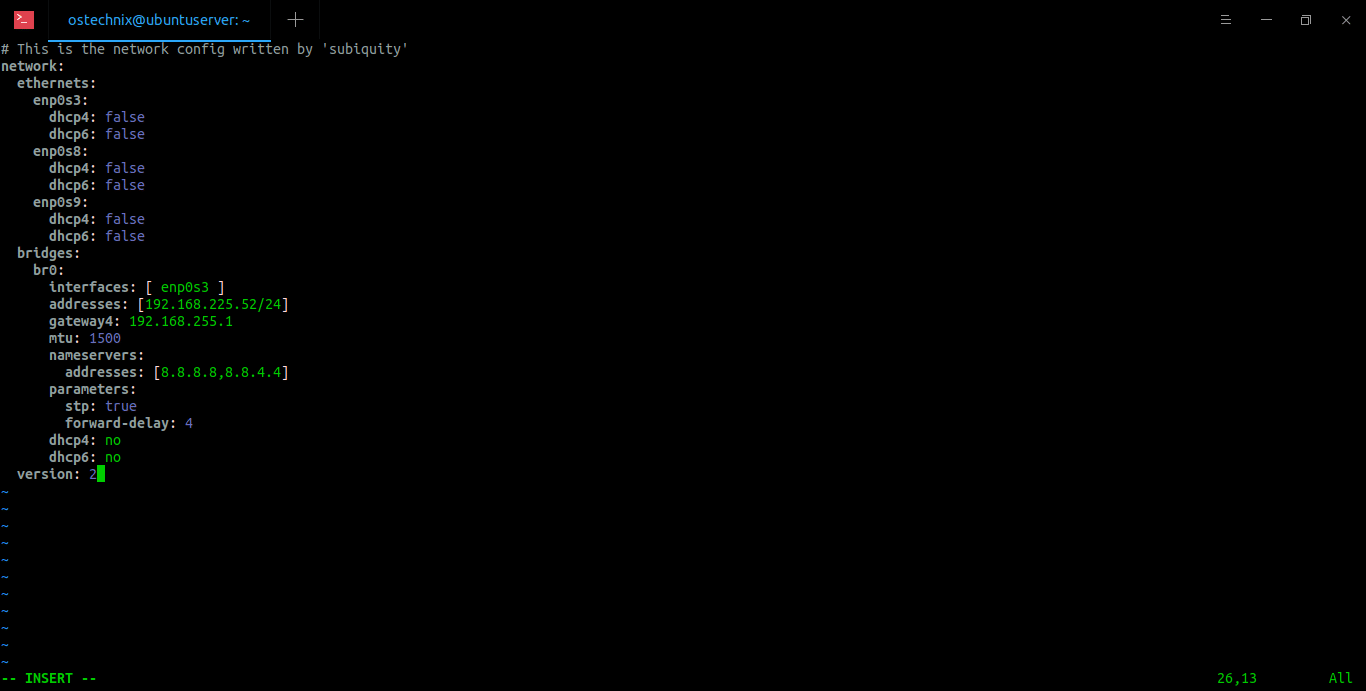

To create a network bridge on the host, edit the /etc/netplan/00-installer-config.yaml file and add the bridge details.

Here are the default contents of the 00-installer-config.yaml file on my Ubuntu 20.04 LTS server.

$ cat /etc/netplan/00-installer-config.yaml

# This is the network config written by 'subiquity'
network:
  ethernets:
    enp0s3:
      dhcp4: true
    enp0s8:
      dhcp4: true
    enp0s9:
      dhcp4: true
  version: 2

As you can see, I have three wired network interfaces, namely enp0s3, enp0s8 and enp0s9, on my Ubuntu server.

Before editing this file, back up your existing /etc/netplan/00-installer-config.yaml file:

$ sudo cp /etc/netplan/00-installer-config.yaml{,.backup}

Then edit the default config file using your favorite editor:

$ sudo vi /etc/netplan/00-installer-config.yaml

Modify it as shown below:

# This is the network config written by 'subiquity'
network:
  ethernets:
    enp0s3:
      dhcp4: false
      dhcp6: false
    enp0s8:
      dhcp4: false
      dhcp6: false
    enp0s9:
      dhcp4: false
      dhcp6: false
  bridges:
    br0:
      interfaces: [ enp0s3 ]
      addresses: [192.168.225.52/24]
      gateway4: 192.168.225.1
      mtu: 1500
      nameservers:
        addresses: [8.8.8.8,8.8.4.4]
      parameters:
        stp: true
        forward-delay: 4
      dhcp4: no
      dhcp6: no
  version: 2

Here, the bridge network interface "br0" is attached to the host's network interface "enp0s3". The IP address of br0 is 192.168.225.52 and the gateway is 192.168.225.1. I use the Google DNS servers (8.8.8.8 and 8.8.4.4) for name resolution. Make sure the indentation (spaces only, no tabs) is exactly the same as above; if it is not, the bridged network interface will not activate. Replace the values above with ones that match your network.
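If you manage more than one host, the bridge stanza can be generated rather than typed by hand. Below is a minimal sketch with a hypothetical function name and argument order; it covers only the single NIC attached to the bridge, so any other interfaces in your file must be added back manually.

```shell
#!/usr/bin/env bash
# Minimal sketch: print a netplan config that attaches one NIC to bridge br0
# with a static address, mirroring the example above.
# Arguments: NIC, address in CIDR form, gateway, optional DNS list.
make_netplan_bridge() {
  local nic=$1 cidr=$2 gateway=$3 dns=${4:-8.8.8.8,8.8.4.4}
  # Netplan (YAML) indentation must use spaces, never tabs.
  cat <<EOF
network:
  version: 2
  ethernets:
    $nic:
      dhcp4: false
      dhcp6: false
  bridges:
    br0:
      interfaces: [ $nic ]
      addresses: [ $cidr ]
      gateway4: $gateway
      mtu: 1500
      nameservers:
        addresses: [ $dns ]
      parameters:
        stp: true
        forward-delay: 4
      dhcp4: false
      dhcp6: false
EOF
}
```

For example, `make_netplan_bridge enp0s3 192.168.225.52/24 192.168.225.1 | sudo tee /etc/netplan/00-installer-config.yaml` replaces the whole file. When connected over SSH, `sudo netplan try` is a safer way to test it than `apply`, because it rolls the change back automatically unless you confirm it.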

After modifying the network config file, save and close it. Apply the changes by running the following command:

$ sudo netplan --debug apply

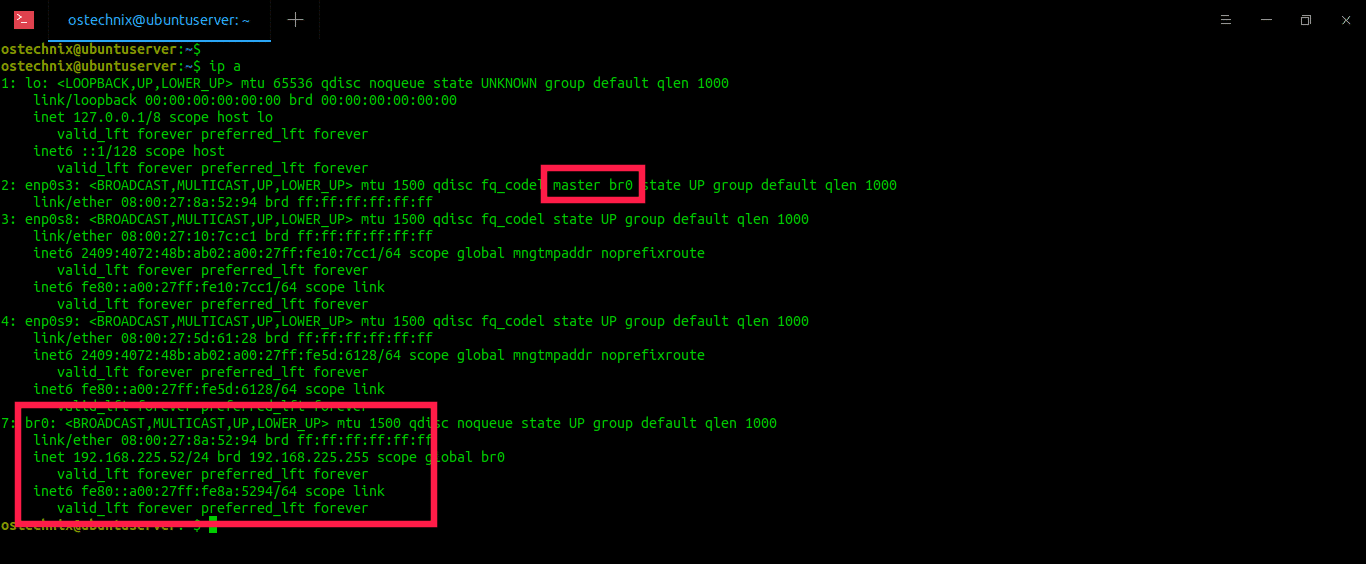

Now check if the IP address has been assigned to the bridge interface:

$ ip a

Sample output:

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel master br0 state UP group default qlen 1000
    link/ether 08:00:27:8a:52:94 brd ff:ff:ff:ff:ff:ff
3: enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
    link/ether 08:00:27:10:7c:c1 brd ff:ff:ff:ff:ff:ff
    inet6 2409:4072:48b:ab02:a00:27ff:fe10:7cc1/64 scope global mngtmpaddr noprefixroute
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe10:7cc1/64 scope link
       valid_lft forever preferred_lft forever
4: enp0s9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
    link/ether 08:00:27:5d:61:28 brd ff:ff:ff:ff:ff:ff
    inet6 2409:4072:48b:ab02:a00:27ff:fe5d:6128/64 scope global mngtmpaddr noprefixroute
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe5d:6128/64 scope link
       valid_lft forever preferred_lft forever
7: br0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
    link/ether 08:00:27:8a:52:94 brd ff:ff:ff:ff:ff:ff
    inet 192.168.225.52/24 brd 192.168.225.255 scope global br0
       valid_lft forever preferred_lft forever
    inet6 fe80::a00:27ff:fe8a:5294/64 scope link
       valid_lft forever preferred_lft forever

As you can see in the output above, the bridged network interface br0 has been assigned the IP address 192.168.225.52, and the enp0s3 entry now shows "master br0", which means that enp0s3 belongs to the bridge.

You can also use the "brctl" command to show the bridge status:

$ brctl show br0

Sample output:

bridge name     bridge id               STP enabled     interfaces
br0             8000.0800278a5294       yes             enp0s3
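brctl comes from the bridge-utils package, which is considered legacy; iproute2 can show the same membership with `ip link show master br0`. As a minimal sketch (the function name `bridge_ports` is hypothetical), the ports of a bridge can also be extracted from plain `ip link` output, which is handy in scripts.

```shell
#!/usr/bin/env bash
# Minimal sketch: list the ports of a bridge from "ip link" output on stdin.
# Live use:  ip link | bridge_ports br0
bridge_ports() {
  local bridge=${1:-br0}
  # Interface header lines look like:
  #   "2: enp0s3: <...> mtu 1500 qdisc fq_codel master br0 state UP ..."
  awk -v br="$bridge" '
    /^[0-9]+: / {
      for (i = 1; i <= NF; i++)
        if ($i == "master" && $(i + 1) == br) {
          name = $2
          sub(/:$/, "", name)   # drop the trailing colon
          sub(/@.*/, "", name)  # drop any "@parent" suffix (e.g. VLANs)
          print name
        }
    }'
}
```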

Now we should configure KVM to use this bridge. To do that, create an XML file called host-bridge.xml (you can save it anywhere, as long as you pass its path to virsh net-define):

$ vi host-bridge.xml

Add the following lines:

<network>
  <name>host-bridge</name>
  <forward mode="bridge"/>
  <bridge name="br0"/>
</network>

Run the following commands to define and start the new bridge network and set it to start automatically:

$ virsh net-define host-bridge.xml

$ virsh net-start host-bridge

$ virsh net-autostart host-bridge
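As a minimal sketch, the XML file and the three virsh commands can be combined into one helper. The function names are hypothetical; the network name and bridge default to host-bridge and br0 as above. The temporary file is deleted afterwards, since libvirt keeps its own copy of a defined network.

```shell
#!/usr/bin/env bash
# Minimal sketch: print the libvirt network definition for an existing
# host bridge (defaults match the example above).
make_bridge_network_xml() {
  local net_name=${1:-host-bridge} bridge=${2:-br0}
  cat <<EOF
<network>
  <name>$net_name</name>
  <forward mode="bridge"/>
  <bridge name="$bridge"/>
</network>
EOF
}

# Define, start and autostart the network in one step. Needs access to the
# system libvirt instance (e.g. run from a root shell).
define_bridge_network() {
  local net_name=${1:-host-bridge} bridge=${2:-br0}
  local xml
  xml=$(mktemp) || return 1
  make_bridge_network_xml "$net_name" "$bridge" > "$xml"
  virsh net-define "$xml" && virsh net-start "$net_name" && virsh net-autostart "$net_name"
  local rc=$?   # status of the virsh chain, captured before cleanup
  rm -f "$xml"
  return $rc
}
```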

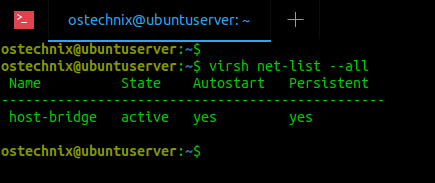

To verify if the bridge is active and started, run:

$ virsh net-list --all

Sample output:

 Name          State    Autostart   Persistent
------------------------------------------------
 host-bridge   active   yes         yes
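To run this check from a script instead of by eye, here is a minimal sketch (the function name `net_ready` is hypothetical) that reads `virsh net-list --all` output on stdin and succeeds only if the network is active and set to autostart. It assumes the Name/State/Autostart/Persistent column layout shown above.

```shell
#!/usr/bin/env bash
# Minimal sketch: succeed only if a network is active and autostarted,
# based on "virsh net-list --all" output read on stdin.
net_ready() {
  local net=${1:-host-bridge}
  # Skip the two header lines; columns are Name, State, Autostart, Persistent.
  awk -v n="$net" '
    NR > 2 && $1 == n { found = 1; ok = ($2 == "active" && $3 == "yes") }
    END { exit !(found && ok) }'
}
```

Usage: `virsh net-list --all | net_ready host-bridge && echo "host-bridge is ready"`.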

Congratulations! We have successfully set up the KVM bridge, and it is active now.


2. Create and manage KVM virtual machines using Virsh

We use the "virsh" command-line utility to manage virtual machines. The virsh program is used to create, list, pause, restart, shut down and delete VMs from the command line.

By default, the virtual machine files and other related files are stored under /var/lib/libvirt/ location. The default path to store ISO images is /var/lib/libvirt/boot/. We can of course change these locations when installing a new VM.

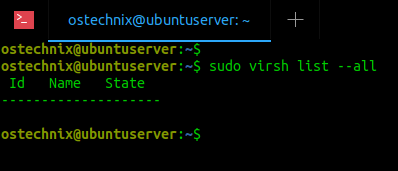

2.1. List all virtual machines

First, let us check whether any virtual machines exist.

To view the list of all available virtual machines, run:

$ sudo virsh list --all

Sample output:

As you can see, there are no existing virtual machines.

2.2. Create KVM virtual machines

Let us create an Ubuntu 18.04 virtual machine with 2 GB RAM, 1 CPU core and a 10 GB disk. To do that, run:

$ sudo virt-install --name Ubuntu-18.04 --ram=2048 --vcpus=1 --cpu host --hvm --disk path=/var/lib/libvirt/images/ubuntu-18.04-vm1,size=10 --cdrom /home/ostechnix/ubuntu18.iso --network bridge=br0 --graphics vnc

Let us break down the above command and see what each option does.

- --name Ubuntu-18.04 : The name of the virtual machine

- --ram=2048 : Allocates 2 GB RAM to the VM.

- --vcpus=1 : Indicates the number of CPU cores in the VM.

- --cpu host : Optimizes the CPU properties for the VM by exposing the host’s CPU’s configuration to the guest.

- --hvm : Requests full hardware virtualization.

- --disk path=/var/lib/libvirt/images/ubuntu-18.04-vm1,size=10 : The location of the VM's disk image and its size. In this case, I have allocated a 10 GB disk.

- --cdrom /home/ostechnix/ubuntu18.iso : The location of the actual Ubuntu installer ISO image.

- --network bridge=br0 : Instructs the VM to use the bridge network. If you haven't configured a bridged network, omit this parameter.

- --graphics vnc : Allows VNC access to the VM from a remote client.

Sample output of the above command would be:

WARNING  Graphics requested but DISPLAY is not set. Not running virt-viewer.
WARNING  No console to launch for the guest, defaulting to --wait -1

Starting install...
Allocating 'ubuntu-18.04-vm1'  |  10 GB  00:00:06
Domain installation still in progress. Waiting for installation to complete.

This message will keep showing until you connect to the VM from a remote system via any VNC application and complete the OS installation.
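To repeat this for several VMs, the virt-install call can be wrapped in a function. This is a minimal sketch: the function name, argument order and the `DRY_RUN` variable are hypothetical, the options are the same ones explained above, and the disk image is named after the VM (for example /var/lib/libvirt/images/Ubuntu-18.04) rather than ubuntu-18.04-vm1.

```shell
#!/usr/bin/env bash
# Minimal sketch: build the virt-install command from parameters.
# Arguments: VM name, ISO path, RAM in MB, vCPUs, disk size in GB.
# With DRY_RUN=1 the command is only printed, not executed.
create_vm() {
  local name=$1 iso=$2 ram_mb=${3:-2048} vcpus=${4:-1} disk_gb=${5:-10}
  local -a cmd
  cmd=(
    virt-install
    --name "$name"
    --ram="$ram_mb"
    --vcpus="$vcpus"
    --cpu host
    --hvm
    --disk "path=/var/lib/libvirt/images/$name,size=$disk_gb"
    --cdrom "$iso"
    --network bridge=br0
    --graphics vnc
  )
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

`DRY_RUN=1 create_vm Ubuntu-18.04 /home/ostechnix/ubuntu18.iso` only prints the command; without DRY_RUN, call it from a root shell.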

Since our KVM host system (Ubuntu server) doesn't have a GUI, we can't continue the guest OS installation from it. So I am going to use a spare machine with a GUI to complete the guest OS installation with the help of a VNC client.

We are done with the Ubuntu server here. The following steps should be performed on a client system.

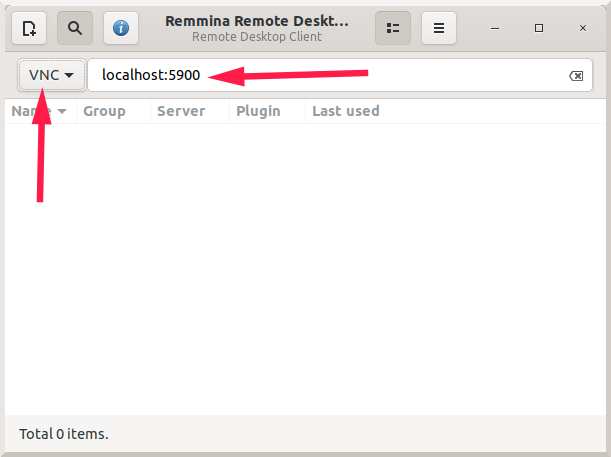

2.3. Access Virtual machines from remote systems via VNC client

Go to a remote system that has a graphical desktop environment and install a VNC client application if one is not installed already. I have an Ubuntu desktop with the Remmina remote desktop client installed.

SSH into the KVM host system:

$ ssh ostechnix@192.168.225.52

Here,

- ostechnix is the name of the user in KVM host (Ubuntu 20.04 server)

- 192.168.225.52 is the IP address of KVM host.

Find the VNC port used by the running VM using the command:

$ sudo virsh dumpxml Ubuntu-18.04 | grep vnc

Replace "Ubuntu-18.04" with your VM's name.

Sample output:

<graphics type='vnc' port='5900' autoport='yes' listen='127.0.0.1'>

The VNC port number is 5900.
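To look up the port in a script, here is a minimal sketch with hypothetical function names. It assumes the `<graphics type='vnc' ...>` line format shown above. Alternatively, `virsh vncdisplay Ubuntu-18.04` prints the VNC display (for example 127.0.0.1:0), and display N corresponds to TCP port 5900 + N.

```shell
#!/usr/bin/env bash
# Minimal sketch: print the VNC port from "virsh dumpxml" output on stdin.
# Live use (as root):  virsh dumpxml Ubuntu-18.04 | vnc_port
vnc_port() {
  # Only look at the VNC <graphics> element; an inactive VM with
  # port='-1' produces no output.
  sed -n "/<graphics type='vnc'/s/.*port='\([0-9]*\)'.*/\1/p" | head -n 1
}

# Convert a "virsh vncdisplay" result such as "127.0.0.1:0" to a port.
display_to_port() {
  local display=${1##*:}
  echo $((5900 + display))
}
```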

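Run the following SSH port forwarding command from your local terminal (if port 5900 is already in use on your client, forward a different local port instead, e.g. -L 15900:127.0.0.1:5900, and connect to that port):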

$ ssh ostechnix@192.168.225.52 -L 5900:127.0.0.1:5900

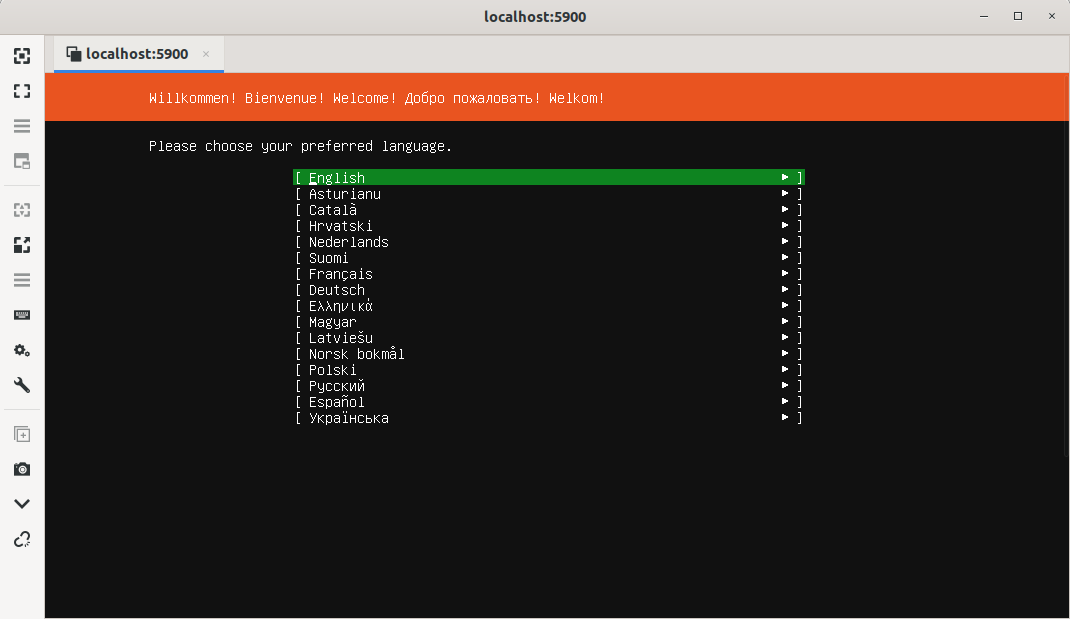

Launch the VNC client application, choose the "VNC" protocol, type "localhost:5900" in the address bar and press ENTER.

The VNC application will now show you the guest OS installation window.

Just continue the guest OS installation. Once the installation is done, close the VNC application window.

2.4. List running VMs

Run the "virsh list" command to see the list of running VMs:

$ sudo virsh list

Sample output:

 Id   Name           State
------------------------------
 2    Ubuntu-18.04   running

As you can see, Ubuntu 18.04 VM is currently running and its ID is 2.
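To pick out VMs by state in a script, here is a minimal sketch (the function name `vms_in_state` is hypothetical) that parses `virsh list --all` output read on stdin. It assumes the Id/Name/State layout above and VM names without spaces. If you only need the running VMs, `virsh list --name` prints just their names.

```shell
#!/usr/bin/env bash
# Minimal sketch: print names of VMs in a given state from
# "virsh list --all" output on stdin. A state such as "shut off" spans two
# columns, so everything after the name is treated as the state.
vms_in_state() {
  local want=${1:-running}
  awk -v want="$want" 'NR > 2 && NF >= 3 {
    state = $3
    for (i = 4; i <= NF; i++) state = state " " $i
    if (state == want) print $2
  }'
}
```

Usage: `sudo virsh list --all | vms_in_state "shut off"`.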

2.5. Start VMs

To start a VM, run:

$ sudo virsh start Ubuntu-18.04

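Note that you can't use an ID here: only running VMs have an ID (a stopped VM shows "-" in the Id column of "virsh list --all"), so start a stopped VM by its name or UUID.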

2.6. Restart VMs

To restart a running VM, do:

$ sudo virsh reboot Ubuntu-18.04

Or,

$ sudo virsh reboot 2

2.7. Pause VMs

To pause a running VM, do:

$ sudo virsh suspend Ubuntu-18.04

Or,

$ sudo virsh suspend 2

2.8. Resume VMs

To resume a suspended VM, do:

$ sudo virsh resume Ubuntu-18.04

Or,

$ sudo virsh resume 2
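Since suspend and resume are opposites, a script can choose between them based on the VM's current state. Here is a minimal sketch with a hypothetical function name, using `virsh domstate`, which prints states such as running, paused or shut off. Run it from a root shell so virsh talks to qemu:///system.

```shell
#!/usr/bin/env bash
# Minimal sketch: pause a running VM, or resume a paused one.
toggle_pause() {
  local vm=$1 state
  state=$(virsh domstate "$vm") || return 1
  case $state in
    running) virsh suspend "$vm" ;;
    paused)  virsh resume "$vm" ;;
    *) echo "$vm is '$state'; nothing to toggle" >&2; return 1 ;;
  esac
}
```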

2.9. Shutdown VMs

To gracefully shut down a running VM (this asks the guest OS to power itself off), do:

$ sudo virsh shutdown Ubuntu-18.04

Or,

$ sudo virsh shutdown 2
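Because `virsh shutdown` only asks the guest to shut down (typically through an ACPI request), the command can return while the guest is still running, and a guest without ACPI support may ignore it. The minimal sketch below (hypothetical function name and default timeout) waits for the "shut off" state and falls back to `virsh destroy` once the timeout expires.

```shell
#!/usr/bin/env bash
# Minimal sketch: graceful shutdown with a forced power-off fallback.
# Arguments: VM name, timeout in seconds (default 60). Run as root.
shutdown_vm() {
  local vm=$1 timeout=${2:-60} waited=0
  virsh shutdown "$vm" || return 1
  # "virsh domstate" prints e.g. "running" or "shut off".
  while [ "$(virsh domstate "$vm")" != "shut off" ]; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "$vm did not stop after ${timeout}s, forcing power off" >&2
      virsh destroy "$vm"
      return
    fi
    sleep 1
    waited=$((waited + 1))
  done
}
```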

2.10. Delete VMs

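To completely remove a VM, first stop it (if it is running) and then remove its definition:

$ sudo virsh destroy Ubuntu-18.04

$ sudo virsh undefine Ubuntu-18.04

Note that "virsh destroy" forcefully powers off the VM, and "virsh undefine" does not delete the VM's disk image.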
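As a minimal sketch (the function name and the `REMOVE_STORAGE` variable are hypothetical), deletion can check the VM's state first and optionally remove its disk as well. `virsh undefine --remove-all-storage` removes the storage volumes libvirt associates with the VM; whether your image counts as one depends on it living in a libvirt storage pool, so check afterwards.

```shell
#!/usr/bin/env bash
# Minimal sketch: delete a VM, forcing it off first only if needed.
# Set REMOVE_STORAGE=1 to also remove its associated storage volumes.
# Run as root so virsh uses qemu:///system.
delete_vm() {
  local vm=$1
  if [ "$(virsh domstate "$vm")" != "shut off" ]; then
    virsh destroy "$vm" || return 1
  fi
  if [ "${REMOVE_STORAGE:-0}" = 1 ]; then
    virsh undefine "$vm" --remove-all-storage
  else
    virsh undefine "$vm"
  fi
}
```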

Virsh has lots of commands and options. To learn about all of them, refer to the virsh help section:

$ virsh --help

3. Manage KVM guests graphically

Remembering all virsh commands is nearly impossible. If you are a budding Linux admin, you may find it hard to perform all KVM management operations from the command line. No worries! There are a few graphical tools available to manage KVM guest machines, such as the web-based Cockpit console and the Virt-manager desktop application. The following guides explain how to manage KVM guests using Cockpit and Virt-manager in detail.

- Manage KVM Virtual Machines Using Cockpit Web Console

- How To Manage KVM Virtual Machines With Virt-Manager

4. Enable Virsh Console Access For Virtual Machines

After creating the KVM guests, I was able to access them via SSH, a VNC client, Virt-viewer, Virt-manager, the Cockpit web console and so on. However, I couldn't access them using the "virsh console" command. To access KVM guests using "virsh console", refer to the following guide:

Other KVM related guides

- Create A KVM Virtual Machine Using Qcow2 Image In Linux

- How To Migrate Virtualbox VMs Into KVM VMs In Linux

- Enable UEFI Support For KVM Virtual Machines In Linux

- How To Enable Nested Virtualization In KVM In Linux

- Display Virtualization Systems Stats With Virt-top In Linux

- How To Find The IP Address Of A KVM Virtual Machine

- How To Rename KVM Guest Virtual Machine

- Access And Modify Virtual Machine Disk Images With Libguestfs

- Quickly Build Virtual Machine Images With Virt-builder

- How To Rescue Virtual Machines With Virt-rescue

- How To Extend KVM Virtual Machine Disk Size In Linux

- Setup A Shared Folder Between KVM Host And Guest

- How To Change KVM Libvirt Default Storage Pool Location

- [Solved] Cannot access storage file, Permission denied Error in KVM Libvirt

- How To Export And Import KVM Virtual Machines In Linux

Conclusion

In this guide, we discussed how to install and configure KVM in Ubuntu 20.04 LTS server edition.

We also looked at how to create and manage KVM virtual machines from the command line using the virsh tool, and pointed to guides on managing them with the GUI tools Cockpit and Virt-manager.

Finally, we pointed to a guide on enabling virsh console access for KVM virtual machines. At this stage, you should have a fully working virtualization environment on your Ubuntu 20.04 server.


9 comments

Thank a lot…. I’ve followed other tutorials but you are the only one mentioning about /etc/sysctl.d/bridge.conf

Now my network is working.

I had to change the command locally to SSH -L 15900:127.0.0.1:5900 myuser@myserverIP to get it working, thanks for the guide though it was fantastic.

Great guide it worked as a charm for me. Thank you very much. I have been trying to perform installs using KVM for the longest while but have not succeeded until i followed your clear and concise guide.

`qemu` is now just a dummy package and can be omitted from the install.

In the apt command, add the option --no-install-recommends so it doesn't install other non-headless packages such as X11

Is it required to have more than 1 NIC on the machine?

No. One NIC is enough.

Great info and summary – however, one thing missing:

Where to save the XML file you refer to?

“Now we should configure KVM to use this bridge. To do that, create a an XML file called host-bridge.xml :”

Save it anywhere and specify the correct path when defining network bridge.

$ virsh net-define /path/to/host-bridge.xml